In addition to general obstacle recognition, the ET7's LiDAR has an ROI function that enables detection of objects that are difficult for cameras to recognize.

Nio, which briefly introduced the ET7 sedan's LiDAR in a video yesterday, today published an article describing the device in detail.

"What makes a high-performance LiDAR?" reads the first paragraph of the article.

The LiDAR on board the Nio ET7 contains a multiplexed 1550nm narrowband laser and receiver system.

The laser emits light pulses, which an internal high-speed rotating mirror system steers toward objects at different locations in space. The objects reflect the pulses back to the receiver, and by sweeping the beam this way the LiDAR completes a scan of the entire field of view.

The receiver calculates the distance between the vehicle and the reflecting object by measuring the light pulse's round-trip time, from emission until its reflection arrives back.
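The time-of-flight relationship reduces to one formula: distance = (speed of light × round-trip time) / 2, halved because the pulse travels out and back. This minimal Python sketch assumes the speed of light in vacuum and ignores the tiny correction for air's refractive index; the function names are illustrative and not part of any Nio software.

```python
# Speed of light in vacuum, in meters per second (exact SI value).
# Assumption: the ~0.03% slowdown in air is ignored.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_time_s: float) -> float:
    """Return the one-way distance to the reflecting object.

    The pulse travels to the object and back, so the measured
    round-trip time covers twice the distance.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def round_trip_time_s(distance_m: float) -> float:
    """Inverse: how long a pulse takes to return from distance_m."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    t = round_trip_time_s(500.0)
    print(f"500 m target: echo after {t * 1e6:.3f} microseconds")
    print(f"recovered distance: {tof_distance_m(t):.1f} m")
```

For a target at 500 meters the echo returns after roughly 3.34 microseconds, and a timing error of just 1 nanosecond corresponds to about 15 cm of range error, which is why the receiver's timing must be very precise.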

As a result, the LiDAR sees the world as a point cloud built up from the returns of these light pulses.
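Each return becomes one point of that cloud by combining the measured range with the direction the beam was pointing when the pulse left. The sketch below assumes a hypothetical vehicle frame (x forward, y left, z up) with azimuth and elevation in degrees; Nio's article does not describe the ET7's actual coordinate convention.

```python
import math
from typing import Iterable, List, NamedTuple, Tuple


class Point(NamedTuple):
    x: float  # forward, meters (hypothetical frame)
    y: float  # left, meters
    z: float  # up, meters


def return_to_point(range_m: float, azimuth_deg: float, elevation_deg: float) -> Point:
    """Convert one return (range plus beam direction) to Cartesian coordinates."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the ground plane
    return Point(horizontal * math.cos(az), horizontal * math.sin(az), range_m * math.sin(el))


def build_point_cloud(returns: Iterable[Tuple[float, float, float]]) -> List[Point]:
    """Turn (range_m, azimuth_deg, elevation_deg) returns into a point cloud."""
    return [return_to_point(r, az, el) for r, az, el in returns]


if __name__ == "__main__":
    scan = [(50.0, 0.0, 0.0), (50.0, 10.0, -1.0), (120.0, -20.0, 2.0)]
    for p in build_point_cloud(scan):
        print(f"x={p.x:7.2f}  y={p.y:7.2f}  z={p.z:6.2f}")
```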

So what enables the Nio ET7's LiDAR to see far, see clearly and see more?

The following is a translation of the main text of the article from Nio (NYSE: NIO, HKEX: 9866).

Ultra-long detection distance of 500 meters

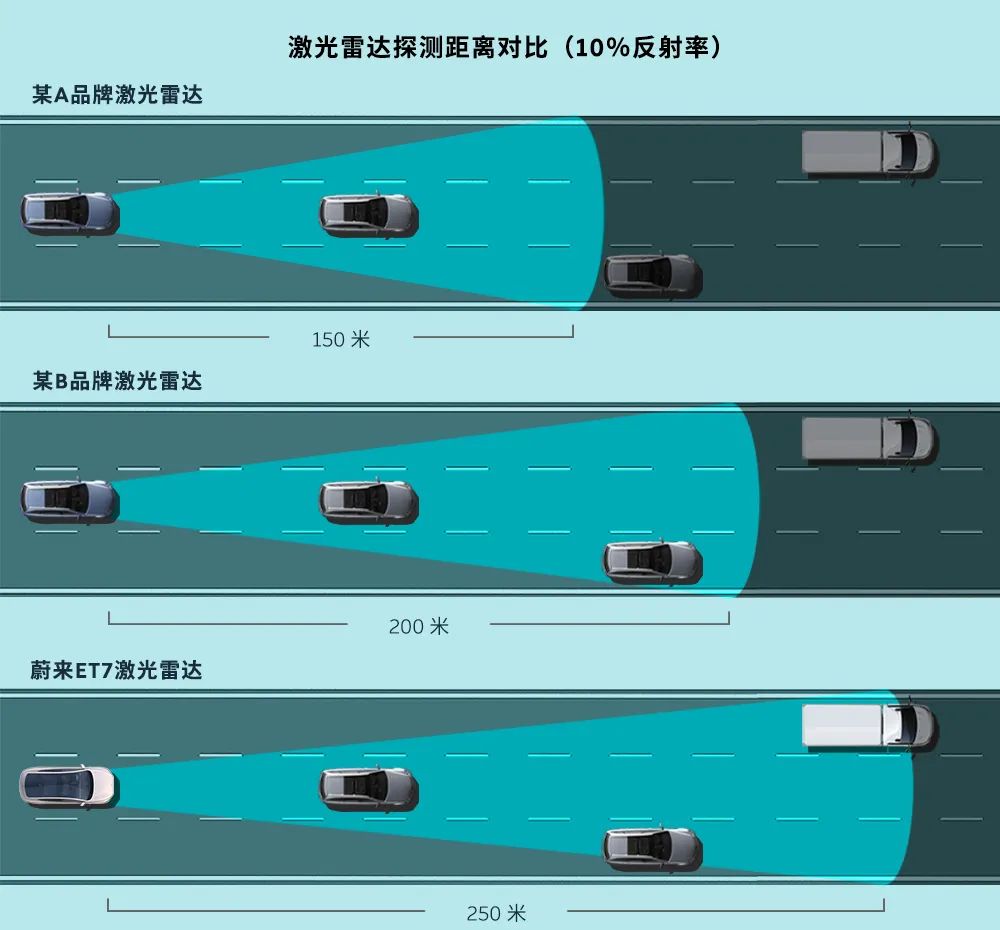

The Nio ET7's LiDAR has a maximum detection range of 500 meters, and a range of 250 meters for targets with 10 percent reflectivity, an industry-leading figure.

How far a LiDAR can see depends mainly on its laser power.

The Nio ET7's LiDAR uses a laser wavelength of 1550nm. Light at this wavelength is absorbed by the cornea and lens before it reaches the retina, so it does not damage the retina and is safer for the human eye.

Therefore, a 1550nm LiDAR can operate at higher output power and achieve a longer detection range.

While driving, detecting an obstacle ahead 100 meters earlier buys extremely valuable reaction and braking time, especially at high speed.
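The value of those extra 100 meters is easy to quantify: divide the distance by the vehicle's speed. The speeds in this short Python sketch are illustrative assumptions, not figures from Nio.

```python
def seconds_gained(extra_distance_m: float, speed_kmh: float) -> float:
    """Time the vehicle needs to cover extra_distance_m at a constant speed."""
    speed_m_per_s = speed_kmh / 3.6  # convert km/h to m/s
    return extra_distance_m / speed_m_per_s


if __name__ == "__main__":
    # Illustrative speeds only; the article does not specify any.
    for speed in (60, 100, 120):
        print(f"{speed} km/h: {seconds_gained(100.0, speed):.1f} s of extra warning")
```

At 120 km/h, for instance, 100 meters of earlier detection corresponds to 3 seconds, and at 60 km/h to 6 seconds.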

Ultra-high resolution

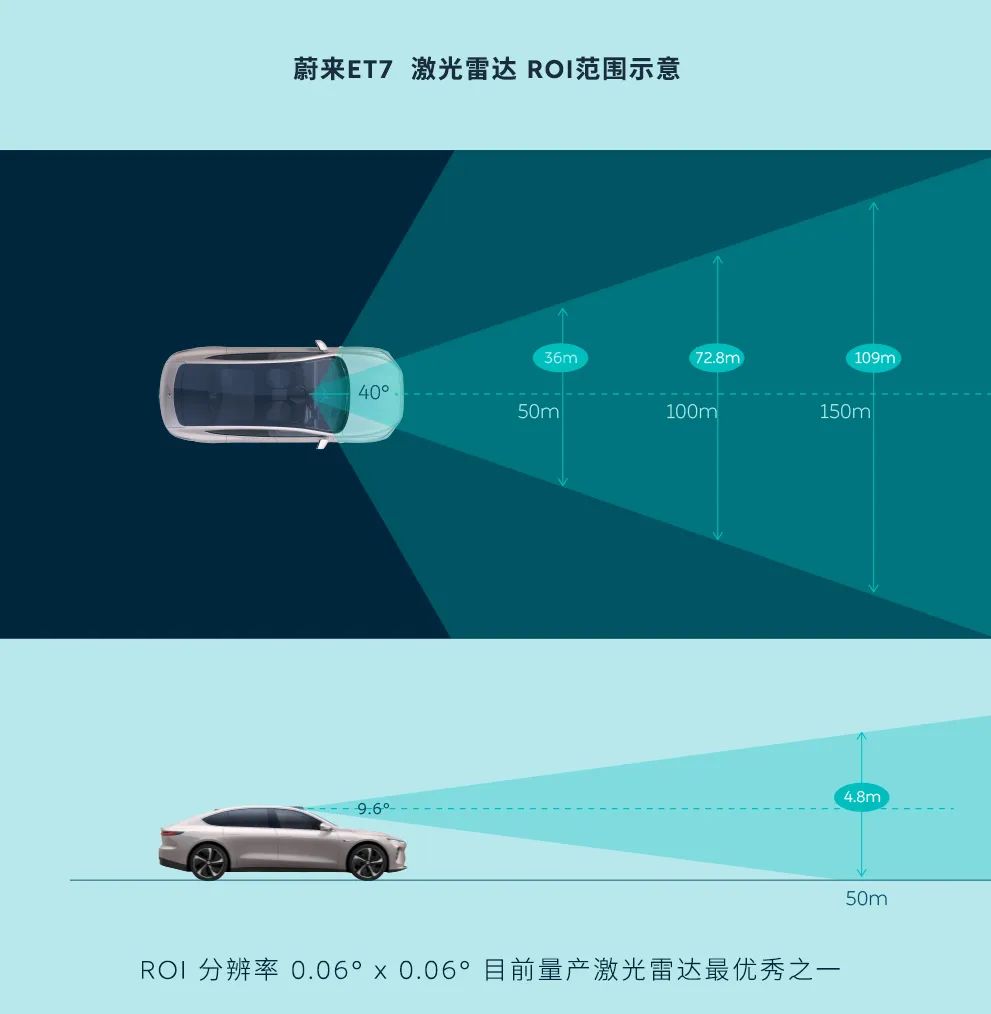

Thanks to its high-speed laser and fast mirror system, the Nio ET7's LiDAR can use its Region of Interest (ROI) function to generate a high-density point cloud in part of the field of view, obtaining more accurate 3D information there.

Within the ROI, the Nio ET7's LiDAR reaches an ultra-high angular resolution of 0.06° x 0.06°, making it one of the best vehicle LiDARs in mass production today.

The ROI lets the LiDAR track vehicles and pedestrians better, and it can be adjusted for road slopes and turns, improving the reliability and safety of autonomous driving and picking up details the naked eye misses.

Take a LiDAR with 0.1° angular resolution as an example: at a range of about 200 meters, two adjacent points are 35cm apart. The point cloud is then too sparse on targets such as pedestrians, bicycles and motorcycles, which makes them extremely challenging for the algorithm to recognize.
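The 35cm figure follows from simple geometry: two beams separated by an angle θ land 2R·sin(θ/2) ≈ Rθ apart at range R. This sketch assumes both points lie at the same 200-meter range and compares the 0.1° case with the ET7's 0.06° ROI resolution.

```python
import math


def point_spacing_m(range_m: float, angular_resolution_deg: float) -> float:
    """Distance between two adjacent returns at the same range.

    Two beams separated by angle theta hit points on a circle of radius
    range_m; the straight-line (chord) distance between them is
    2 * R * sin(theta / 2), approximately R * theta for small angles.
    """
    theta = math.radians(angular_resolution_deg)
    return 2.0 * range_m * math.sin(theta / 2.0)


if __name__ == "__main__":
    for resolution in (0.1, 0.06):
        spacing_cm = point_spacing_m(200.0, resolution) * 100.0
        print(f"{resolution} deg at 200 m: {spacing_cm:.1f} cm between points")
```

It prints about 34.9 cm for 0.1° and about 20.9 cm for 0.06°, so at the same distance the ROI places points roughly 40 percent closer together.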

The Nio ET7's LiDAR has a field of view of 120° (horizontal) x 25° (vertical), while the ROI covers 40° (horizontal) x 9.6° (vertical). Within this area the angular resolution reaches an ultra-high 0.06° x 0.06°, fully covering the road ahead.
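Dividing each angular extent by the resolution gives a rough idea of how many samples the ROI holds per frame. This sketch assumes a simple rectangular grid with one return per cell, which is a simplification since Nio does not describe the actual scan pattern; the area fraction also treats the angles as a flat rectangle rather than a true solid angle.

```python
from typing import Tuple

Extent = Tuple[float, float]  # (horizontal, vertical) in degrees

FOV_DEG: Extent = (120.0, 25.0)  # full field of view, from Nio's spec
ROI_DEG: Extent = (40.0, 9.6)  # ROI extent, from Nio's spec
ROI_RES_DEG: Extent = (0.06, 0.06)  # ROI angular resolution, from Nio's spec


def grid_size(extent_deg: Extent, resolution_deg: Extent) -> Tuple[int, int]:
    """Approximate (columns, rows) of a rectangular scan grid (assumption)."""
    return (round(extent_deg[0] / resolution_deg[0]), round(extent_deg[1] / resolution_deg[1]))


def area_fraction(part_deg: Extent, whole_deg: Extent) -> float:
    """Share of the angular rectangle covered, ignoring solid-angle distortion."""
    return (part_deg[0] * part_deg[1]) / (whole_deg[0] * whole_deg[1])


if __name__ == "__main__":
    cols, rows = grid_size(ROI_DEG, ROI_RES_DEG)
    print(f"ROI grid: {cols} x {rows} = {cols * rows} samples per frame")
    print(f"ROI share of field of view: {area_fraction(ROI_DEG, FOV_DEG):.1%}")
```

Under these assumptions the ROI works out to about 667 x 160, or roughly 107,000 samples, while covering only about 12.8 percent of the field of view's angular area, which shows how the LiDAR concentrates its point density on the road ahead.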

In addition to general obstacle recognition, the ET7's LiDAR uses its ROI function to detect objects that cameras have difficulty recognizing, such as debris dropped on the road, fallen rocks and tires.

Detection in darkness and glare

Nio's vision in developing autonomous driving technology is to make traffic safer and more efficient, freeing up users' time.

As road conditions grow more complex, solutions based on cameras and millimeter-wave radar, with current technology, struggle to recognize objects in a timely, stable and reliable way in glare, in low light, or when there are stationary obstacles ahead.

In these conditions, LiDAR has significant advantages.

By collecting reflected light pulses, LiDAR outputs 3D spatial data that can effectively identify road bumps, missing manhole covers, spilled objects, large stationary obstacles and other targets that cameras currently struggle to identify. This is of great value in enhancing the safety of autonomous driving and assisted driving.
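As a toy illustration of how 3D data exposes hazards that a flat image can miss, the sketch below flags points that rise above or dip below the road surface. It assumes the points are already in a hypothetical frame where flat ground lies at z = 0, and the 15 cm and 10 cm thresholds are hypothetical. Production perception systems fit the ground surface and cluster points far more carefully, and this is not Nio's algorithm.

```python
from typing import List, Sequence, Tuple

# Hypothetical frame: (x forward, y left, z up) in meters, flat ground at z = 0.
Point = Tuple[float, float, float]


def flag_ground_anomalies(
    points: Sequence[Point],
    bump_threshold_m: float = 0.15,  # hypothetical: higher than this counts as raised
    hole_threshold_m: float = 0.10,  # hypothetical: deeper than this counts as sunken
) -> Tuple[List[Point], List[Point]]:
    """Split points into raised (bumps, debris) and sunken (holes) candidates."""
    raised: List[Point] = []
    sunken: List[Point] = []
    for point in points:
        height = point[2]
        if height > bump_threshold_m:
            raised.append(point)  # e.g. a rock or a dropped tire
        elif height < -hole_threshold_m:
            sunken.append(point)  # e.g. a missing manhole cover
    return raised, sunken


if __name__ == "__main__":
    cloud = [(10.0, 0.0, 0.02), (20.0, 0.0, 0.30), (30.0, 1.0, -0.40)]
    raised, sunken = flag_ground_anomalies(cloud)
    print("raised:", raised)
    print("sunken:", sunken)
```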

Therefore, with current technology, LiDAR is an important supplement to the perception systems used for autonomous driving and assisted driving.